1. Environment setup

- Clone the GitHub repository.

git clone https://github.com/ultralytics/yolov3.git

- Setting up the environment with Anaconda on Linux is recommended.

conda create -n yolov3 python=3.7

- Install the required packages.

pip install -r requirements.txt

Requirements:

- python >= 3.7

- pytorch >= 1.1

- numpy

- tqdm

- opencv-python

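Only the PyTorch installation needs special attention:

Go to https://pytorch.org/ and pick the install command that matches your operating system, Python version, and CUDA version.

For setting up a deep learning environment, see: https://www.cnblogs.com/pprp/p/9463974.html

Common Anaconda usage: https://www.cnblogs.com/pprp/p/9463124.html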
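After installing, a quick check like the following can confirm that the environment matches the requirements listed above. This is only a sketch: the helper names are made up for illustration, and the module names assume the usual import names (pytorch is imported as torch, opencv-python as cv2).

```python
import importlib.util
import sys

# Import names differ from the pip names: pytorch -> torch, opencv-python -> cv2.
REQUIRED_MODULES = ["torch", "numpy", "tqdm", "cv2"]


def missing_packages(module_names):
    """Return the module names that cannot be found in the current environment."""
    return [name for name in module_names if importlib.util.find_spec(name) is None]


def python_is_new_enough(minimum=(3, 7)):
    """Check the interpreter version against the minimum listed above."""
    return sys.version_info >= minimum


print("Python OK:", python_is_new_enough())
print("Missing:", missing_packages(REQUIRED_MODULES) or "none")
```

If anything is reported as missing, install it inside the yolov3 conda environment before continuing.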

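2. Building the dataset

1. Generating the XML files requires LabelImg

Annotate the images with LabelImg on Windows; it can be downloaded online or found by searching GitHub.

- Keyboard shortcuts:

Ctrl + u Load all images in a directory (same as clicking Open dir)

Ctrl + r Change the default annotation target directory (where the XML files are saved)

Ctrl + s Save

Ctrl + d Copy the current label and rectangle box

space Flag the current image as verified

w Create a rectangle box

d Next image

a Previous image

del Delete the selected rectangle box

Ctrl++ Zoom in

Ctrl-- Zoom out

↑→↓← Move the selected rectangle box with the arrow keys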

2. VOC2007 dataset format

- data
    - VOCdevkit2007
        - VOC2007
            - Annotations (label XML files, created by hand with an annotation tool)
            - ImageSets (generated with a shell or MATLAB script)
                - Main
                    - test.txt
                    - train.txt
                    - trainval.txt
                    - val.txt
            - JPEGImages (original images)
            - labels (txt files corresponding to the XML files)
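Before generating the split files it can help to confirm that every XML file in Annotations has a matching image in JPEGImages. The sketch below compares file stems; the function name is hypothetical and it assumes the images use the .jpg extension.

```python
import os


def find_unpaired(annotation_files, image_files):
    """Compare file stems and report annotations without images and vice versa."""
    xml_stems = {os.path.splitext(f)[0] for f in annotation_files if f.endswith(".xml")}
    jpg_stems = {os.path.splitext(f)[0] for f in image_files if f.endswith(".jpg")}
    return sorted(xml_stems - jpg_stems), sorted(jpg_stems - xml_stems)


# In-memory example; on disk you would pass os.listdir("Annotations")
# and os.listdir("JPEGImages") from inside the VOC2007 directory.
no_image, no_label = find_unpaired(["0001.xml", "0002.xml"], ["0002.jpg", "0003.jpg"])
print("XML without image:", no_image)   # ['0001']
print("Image without XML:", no_label)   # ['0003']
```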

The tools above are mainly used to fill the JPEGImages and Annotations folders; the txt files in the Main folder can be generated with a Python script:

import os
import random

trainval_percent = 0.8  # fraction of all samples used for train + val
train_percent = 0.8     # fraction of trainval used for train
xmlfilepath = 'Annotations'
txtsavepath = 'ImageSets/Main'

total_xml = os.listdir(xmlfilepath)
num = len(total_xml)
indices = range(num)  # named `indices` so the built-in `list` is not shadowed
tv = int(num * trainval_percent)
tr = int(tv * train_percent)
trainval = random.sample(indices, tv)
train = random.sample(trainval, tr)

ftrainval = open(os.path.join(txtsavepath, 'trainval.txt'), 'w')
ftest = open(os.path.join(txtsavepath, 'test.txt'), 'w')
ftrain = open(os.path.join(txtsavepath, 'train.txt'), 'w')
fval = open(os.path.join(txtsavepath, 'val.txt'), 'w')

for i in indices:
    name = total_xml[i][:-4] + '\n'  # drop the '.xml' extension
    if i in trainval:
        ftrainval.write(name)
        if i in train:
            ftrain.write(name)
        else:
            fval.write(name)
    else:
        ftest.write(name)

ftrainval.close()
ftrain.close()
fval.close()
ftest.close()
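The script above draws a new random split on every run. If a repeatable split is wanted, one option is to shuffle with a seeded generator, as in this sketch. The function name and the seed parameter are assumptions for illustration, not part of the original script; the two percentages have the same meaning as above.

```python
import random


def split_ids(ids, trainval_percent=0.8, train_percent=0.8, seed=0):
    """Split ids into train / val / test lists; trainval is train + val."""
    rng = random.Random(seed)  # local generator so the split is repeatable
    shuffled = list(ids)
    rng.shuffle(shuffled)
    tv = int(len(shuffled) * trainval_percent)
    tr = int(tv * train_percent)
    return shuffled[:tr], shuffled[tr:tv], shuffled[tv:]


train, val, test = split_ids(["%04d" % i for i in range(100)])
print(len(train), len(val), len(test))  # 64 16 20
```

With both percentages at 0.8, the data ends up as 64% train, 16% val, and 20% test.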

Generate the labels files. The contents of voc_label.py are as follows:

# -*- coding: utf-8 -*-
"""
Created on Tue Oct 2 11:42:13 2018

Place this file in the directory that contains the VOC2007 folder (the paths
used below are relative, e.g. VOC2007/Annotations), then run it directly.

What to change:
1. Replace `sets` with your own dataset splits
2. Replace `classes` with your own classes
"""
import xml.etree.ElementTree as ET
import os
from os import getcwd

sets = [('2007', 'train'), ('2007', 'val'), ('2007', 'test')]  # replace with your own dataset splits
classes = ["head", "eye", "nose"]  # change to your own classes
# classes = ["eye", "nose"]

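def convert(size, box):
    """Convert a VOC box (xmin, xmax, ymin, ymax) to normalized (x_center, y_center, w, h)."""
    dw = 1. / size[0]
    dh = 1. / size[1]
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    w = box[1] - box[0]
    h = box[3] - box[2]
    x = x * dw
    w = w * dw
    y = y * dh
    h = h * dh
    return (x, y, w, h)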

def convert_annotation(year, image_id):
    # The dataset is expected in the current directory
    in_file = open('VOC%s/Annotations/%s.xml' % (year, image_id))
    out_file = open('VOC%s/labels/%s.txt' % (year, image_id), 'w')
    tree = ET.parse(in_file)
    root = tree.getroot()
    size = root.find('size')
    w = int(size.find('width').text)
    h = int(size.find('height').text)

    for obj in root.iter('object'):
        difficult = obj.find('difficult').text
        cls = obj.find('name').text
        # Skip classes that are not trained on and objects marked as difficult
        if cls not in classes or int(difficult) == 1:
            continue
        cls_id = classes.index(cls)
        xmlbox = obj.find('bndbox')
        b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text),
             float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))
        bb = convert((w, h), b)
        out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')

    # Close both files so the label file is flushed to disk
    in_file.close()
    out_file.close()

wd = getcwd()

for year, image_set in sets:
    if not os.path.exists('VOC%s/labels/' % year):
        os.makedirs('VOC%s/labels/' % year)
    image_ids = open('VOC%s/ImageSets/Main/%s.txt' % (year, image_set)).read().strip().split()
    list_file = open('%s_%s.txt' % (year, image_set), 'w')
    for image_id in image_ids:
        list_file.write('VOC%s/JPEGImages/%s.jpg\n' % (year, image_id))
        convert_annotation(year, image_id)
    list_file.close()

# os.system("cat 2007_train.txt 2007_val.txt > train.txt")  # adapt to your own dataset for training
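To make the label format concrete, here is a small worked example that reuses the arithmetic of convert() on a made-up box. The image size, box coordinates, and class id 0 are hypothetical values chosen only for illustration.

```python
def convert(size, box):
    # Same arithmetic as convert() in voc_label.py: box is (xmin, xmax, ymin, ymax).
    dw, dh = 1.0 / size[0], 1.0 / size[1]
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    w = box[1] - box[0]
    h = box[3] - box[2]
    return (x * dw, y * dh, w * dw, h * dh)


# Hypothetical 640x480 image with a box xmin=100, xmax=300, ymin=50, ymax=250.
x, y, w, h = convert((640, 480), (100.0, 300.0, 50.0, 250.0))
label_line = "0 " + " ".join("%.4f" % v for v in (x, y, w, h))
print(label_line)  # 0 0.3109 0.3104 0.3125 0.4167
```

Each line of a labels txt file therefore holds a class index followed by the box center, width, and height, all scaled to the 0..1 range by the image size.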

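At this point the VOC-format dataset is complete, but a darknet-format dataset (COCO) still has to be built.

Note: if you plan to use the COCO evaluation metrics, you need to build the COCO JSON file. If your requirements are modest, the VOC format alone is enough and you can use the mAP calculation provided by the repository author.

Script for converting VOC XML to COCO JSON: xml2json.py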

#!/usr/bin/python
# -*- coding: utf-8 -*-
"""
Created on Tue Aug 28 15:01:03 2018

Convert VOC XML annotations to the COCO dataset JSON format.
XML_PATH and JSON_PATH need to be changed.
"""
import os
import json
from xml.etree.ElementTree import ElementTree
from collections import OrderedDict

XML_PATH = "/home/learner/datasets/VOCdevkit2007/VOC2007/Annotations/test"
JSON_PATH = "./test.json"

json_obj = {}
images = []
annotations = []
categories = []
categories_list = []
annotation_id = 1

def read_xml(in_path):
    '''Read and parse an XML file.'''
    tree = ElementTree()
    tree.parse(in_path)
    return tree


def if_match(node, kv_map):
    '''Check whether a node has all of the given attributes.
    node: the node
    kv_map: map of attribute names to attribute values'''
    for key in kv_map:
        if node.get(key) != kv_map.get(key):
            return False
    return True


def get_node_by_keyvalue(nodelist, kv_map):
    '''Return the nodes whose attributes match the given names and values.
    nodelist: list of nodes
    kv_map: map of attribute names to attribute values to match'''
    result_nodes = []
    for node in nodelist:
        if if_match(node, kv_map):
            result_nodes.append(node)
    return result_nodes


def find_nodes(tree, path):
    '''Find all nodes that match a path.
    tree: the XML tree
    path: the node path'''
    return tree.findall(path)

print("-----------------Start------------------")

xml_names = []
for xml in os.listdir(XML_PATH):
    # Files are assumed to be named Cow_NNNN.xml (as in the image paths shown
    # later in this post); drop the prefix so the names can be sorted numerically.
    xml = xml.replace('Cow_', '')
    xml_names.append(xml)

xml_names.sort(key=lambda x: int(x[:-4]))

# Put the dataset-specific prefix back to recover the real file names
new_xml_names = []
for i in xml_names:
    j = 'Cow_' + i
    new_xml_names.append(j)

for xml in new_xml_names:
    tree = read_xml(XML_PATH + "/" + xml)
    object_nodes = get_node_by_keyvalue(find_nodes(tree, "object"), {})
    if len(object_nodes) == 0:
        print(xml, "no object")
        continue
    else:
        image = OrderedDict()
        file_name = os.path.splitext(xml)[0]  # file name without extension
        para1 = file_name + ".jpg"
        height_nodes = get_node_by_keyvalue(find_nodes(tree, "size/height"), {})
        para2 = int(height_nodes[0].text)
        width_nodes = get_node_by_keyvalue(find_nodes(tree, "size/width"), {})
        para3 = int(width_nodes[0].text)
        fname = file_name[4:]  # numeric part after the 'Cow_' prefix
        para4 = int(fname)
        for f, i in [("file_name", para1), ("height", para2), ("width", para3), ("id", para4)]:
            image.setdefault(f, i)
        images.append(image)  # build the images list

        name_nodes = get_node_by_keyvalue(find_nodes(tree, "object/name"), {})
        xmin_nodes = get_node_by_keyvalue(find_nodes(tree, "object/bndbox/xmin"), {})
        ymin_nodes = get_node_by_keyvalue(find_nodes(tree, "object/bndbox/ymin"), {})
        xmax_nodes = get_node_by_keyvalue(find_nodes(tree, "object/bndbox/xmax"), {})
        ymax_nodes = get_node_by_keyvalue(find_nodes(tree, "object/bndbox/ymax"), {})

        for index, node in enumerate(object_nodes):
            annotation = {}
            segmentation = []
            bbox = []
            # Polygon corners: (xmin, ymin), (xmin, ymax), (xmax, ymax), (xmax, ymin)
            seg_coordinate = []
            seg_coordinate.append(int(xmin_nodes[index].text))
            seg_coordinate.append(int(ymin_nodes[index].text))
            seg_coordinate.append(int(xmin_nodes[index].text))
            seg_coordinate.append(int(ymax_nodes[index].text))
            seg_coordinate.append(int(xmax_nodes[index].text))
            seg_coordinate.append(int(ymax_nodes[index].text))
            seg_coordinate.append(int(xmax_nodes[index].text))
            seg_coordinate.append(int(ymin_nodes[index].text))
            segmentation.append(seg_coordinate)

            width = int(xmax_nodes[index].text) - int(xmin_nodes[index].text)
            height = int(ymax_nodes[index].text) - int(ymin_nodes[index].text)
            area = width * height
            bbox.append(int(xmin_nodes[index].text))
            bbox.append(int(ymin_nodes[index].text))
            bbox.append(width)
            bbox.append(height)

            annotation["segmentation"] = segmentation
            annotation["area"] = area
            annotation["iscrowd"] = 0
            fname = file_name[4:]
            annotation["image_id"] = int(fname)
            annotation["bbox"] = bbox

            cate = name_nodes[index].text
            if cate == 'head':
                category_id = 1
            elif cate == 'eye':
                category_id = 2
            elif cate == 'nose':
                category_id = 3
            else:
                continue  # skip classes that are not listed above

            annotation["category_id"] = category_id
            annotation["id"] = annotation_id
            annotation_id += 1
            annotation["ignore"] = 0
            annotations.append(annotation)

            if category_id in categories_list:
                pass
            else:
                categories_list.append(category_id)
                categorie = {}
                categorie["supercategory"] = "none"
                categorie["id"] = category_id
                categorie["name"] = name_nodes[index].text
                categories.append(categorie)

json_obj["images"] = images
json_obj["type"] = "instances"
json_obj["annotations"] = annotations
json_obj["categories"] = categories

# Write the JSON file; the with-block closes it when done
with open(JSON_PATH, "w") as f:
    json.dump(json_obj, f)

print("------------------End-------------------")
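The core of the conversion is turning VOC corner coordinates into a COCO-style annotation entry. The sketch below isolates that step as a single function; the function name and the sample values are made up for illustration, and the fields mirror the ones xml2json.py fills in.

```python
import json


def coco_annotation(ann_id, image_id, category_id, xmin, ymin, xmax, ymax):
    """Build one COCO-style annotation dict from VOC corner coordinates."""
    width, height = xmax - xmin, ymax - ymin
    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": category_id,
        "bbox": [xmin, ymin, width, height],  # COCO boxes are [x, y, w, h]
        "area": width * height,
        "iscrowd": 0,
        # The box corners reused as a 4-point polygon, as in xml2json.py
        "segmentation": [[xmin, ymin, xmin, ymax, xmax, ymax, xmax, ymin]],
    }


# Hypothetical values: image 1192, class id 1, box from (10, 20) to (110, 220).
ann = coco_annotation(1, 1192, 1, 10, 20, 110, 220)
print(json.dumps(ann["bbox"]), ann["area"])  # [10, 20, 100, 200] 20000
```

Note the difference from the YOLO txt labels: COCO stores the top-left corner plus width and height in pixels, not a normalized center.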

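(Run bash yolov3/data/get_coco_dataset.sh and arrange your data in the same layout.)

However, this repository still needs a few more files:

3. Create the *.names file,

which stores all of your classes, one class per line, e.g. data/coco.names: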

head

eye

nose
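The line order in the names file matters, because the class index written by voc_label.py comes from the position in its classes list; the two should list the classes in the same order. A minimal sketch of reading such a file into an index map follows (the helper name is hypothetical, and the text is passed in as a string so the example is self-contained):

```python
def load_class_names(text):
    """Return the non-empty lines of a *.names file, in order."""
    return [line.strip() for line in text.splitlines() if line.strip()]


names_text = "head\neye\nnose\n"  # contents of the example file above
names = load_class_names(names_text)
class_ids = {name: i for i, name in enumerate(names)}
print(class_ids)  # {'head': 0, 'eye': 1, 'nose': 2}
```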

4. Update data/coco.data, which stores the main configuration settings

classes = 3 # change to the number of classes in your dataset
train = ./data/2007_train.txt # txt file generated by voc_label.py
valid = ./data/2007_test.txt # txt file generated by voc_label.py
names = data/coco.names # class names
backup = backup/ # where checkpoints are stored
eval = coco # which mAP calculation to use
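The data file is a plain list of key = value pairs. The sketch below shows one way such a file could be read; it is not the repository's own parser, and the function name is made up. Whether the repository's parser tolerates the trailing # comments shown above is not verified here, so it may be safer to leave them out of the real file.

```python
def parse_key_value_config(text):
    """Parse 'key = value' lines, ignoring blank lines and '#' comments."""
    options = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop trailing comments
        if not line or "=" not in line:
            continue
        key, value = line.split("=", 1)
        options[key.strip()] = value.strip()
    return options


example = """
classes = 3 # number of classes
train = ./data/2007_train.txt
valid = ./data/2007_test.txt
names = data/coco.names
"""
cfg = parse_key_value_config(example)
print(int(cfg["classes"]), cfg["train"])  # 3 ./data/2007_train.txt
```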

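5. Update the cfg file to change the class-related settings

Open yolov3.cfg in the cfg folder. Broadly speaking, the cfg file describes the structure of the entire network and is the core piece; for a detailed walkthrough see: https://pprp.github.io/2018/09/20/tricks.html

The main change is the number of filters in the convolutional layer right before each [yolo] layer:

In the last convolutional layer before each [region/yolo] layer, filters = (number of predicted boxes) × (classes + 5). The number of predicted boxes equals the number of entries in mask; for example, mask=0,1,2 means the first three anchor pairs are used, so filters should be 3*(classes+5). The 5 covers 4 coordinates plus 1 confidence score (the probability that the cell contains an object), i.e. tx, ty, tw, th, po in the paper.

For example: with three classes, n = 3, so filters = 3×(n+5) = 24. The classes value in each [yolo] layer has to be set to n as well.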

[convolutional]

size=1

stride=1

pad=1

filters=255 # change to 24

activation=linear

[yolo]

mask = 6,7,8

anchors = 10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326

classes=80 # change to 3

num=9

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1
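The filters rule described above is simple enough to check in code. This sketch assumes three mask entries per [yolo] layer, as in the snippet above; the function name is only for illustration.

```python
def yolo_filters(num_classes, num_masks=3):
    """filters = num_masks * (num_classes + 5) for the conv layer before [yolo]."""
    return num_masks * (num_classes + 5)


print(yolo_filters(3))   # 24, the value for the three-class example
print(yolo_filters(80))  # 255, the default in yolov3.cfg for 80 COCO classes
```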

6. Dataset layout

- yolov3
    - data
        - 2007_train.txt
        - 2007_test.txt
        - coco.names
        - coco.data
        - annotations (json files)
        - images (put the images listed in 2007_train.txt into the train2014 folder; likewise for test)
            - train2014
                - 0001.jpg
                - 0002.jpg
            - val2014
                - 0003.jpg
                - 0004.jpg
        - labels (the output of voc_label.py needs to be reorganized)
            - train2014
                - 0001.txt
                - 0002.txt
            - val2014
                - 0003.txt
                - 0004.txt
        - samples (images to run detection on)

Example contents of 2007_train.txt:

/home/dpj/yolov3-master/data/images/val2014/Cow_1192.jpg

/home/dpj/yolov3-master/data/images/val2014/Cow_1196.jpg

.....

Keep the directory structure of images and labels identical, because the path of each txt file is obtained by simple string replacement:

images -> labels

.jpg -> .txt
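The two replacements can be sketched as follows. This only mirrors the substitution described above and is not taken from the repository's code; the function name is hypothetical.

```python
def label_path_for(image_path):
    """Derive the label path by the two substitutions listed above."""
    return image_path.replace("images", "labels").replace(".jpg", ".txt")


p = "/home/dpj/yolov3-master/data/images/val2014/Cow_1192.jpg"
print(label_path_for(p))
# /home/dpj/yolov3-master/data/labels/val2014/Cow_1192.txt
```

Because this is a plain string replacement, any other occurrence of "images" in the path (for example in a parent directory name) would be replaced too, which is one more reason to keep the layout shown above.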

3. Training the model

Pretrained models:

- Darknet *.weights format: https://pjreddie.com/media/files/yolov3.weights
- PyTorch *.pt format: https://drive.google.com/drive/folders/1uxgUBemJVw9wZsdpboYbzUN4bcRhsuAI

Start training:

python train.py --data data/coco.data --cfg cfg/yolov3.cfg

If the log prints normally, training is running.

If training is interrupted, it can be resumed:

python train.py --data data/coco.data --cfg cfg/yolov3.cfg --resume

4. Testing the model

Put the images to test in data/samples, then run

python detect.py --weights weights/best.pt

5. Evaluating the model

python test.py --weights weights/latest.pt

To use the COCO API, run the following commands:

git clone https://github.com/cocodataset/cocoapi && cd cocoapi/PythonAPI && make && cd ../.. && cp -r cocoapi/PythonAPI/pycocotools yolov3

cd yolov3

python3 test.py --save-json --img-size 416

Namespace(batch_size=32, cfg='cfg/yolov3-spp.cfg', conf_thres=0.001, data_cfg='data/coco.data', img_size=416, iou_thres=0.5, nms_thres=0.5, save_json=True, weights='weights/yolov3-spp.weights')

Using CUDA device0 _CudaDeviceProperties(name='Tesla V100-SXM2-16GB', total_memory=16130MB)

Class Images Targets P R mAP F1

Calculating mAP: 100%|█████████████████████████████████████████| 157/157 [05:59<00:00, 1.71s/it]

all 5e+03 3.58e+04 0.109 0.773 0.57 0.186

Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.335

Average Precision (AP) @[ IoU=0.50 | area= all | maxDets=100 ] = 0.565

Average Precision (AP) @[ IoU=0.75 | area= all | maxDets=100 ] = 0.349

Average Precision (AP) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.151

Average Precision (AP) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.360

Average Precision (AP) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.493

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 1 ] = 0.280

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 10 ] = 0.432

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.458

Average Recall (AR) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.255

Average Recall (AR) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.494

Average Recall (AR) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.620

python3 test.py --save-json --img-size 608 --batch-size 16

Namespace(batch_size=16, cfg='cfg/yolov3-spp.cfg', conf_thres=0.001, data_cfg='data/coco.data', img_size=608, iou_thres=0.5, nms_thres=0.5, save_json=True, weights='weights/yolov3-spp.weights')

Using CUDA device0 _CudaDeviceProperties(name='Tesla V100-SXM2-16GB', total_memory=16130MB)

Class Images Targets P R mAP F1

Computing mAP: 100%|█████████████████████████████████████████| 313/313 [06:11<00:00, 1.01it/s]

all 5e+03 3.58e+04 0.12 0.81 0.611 0.203

Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.366

Average Precision (AP) @[ IoU=0.50 | area= all | maxDets=100 ] = 0.607

Average Precision (AP) @[ IoU=0.75 | area= all | maxDets=100 ] = 0.386

Average Precision (AP) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.207

Average Precision (AP) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.391

Average Precision (AP) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.485

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 1 ] = 0.296

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 10 ] = 0.464

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.494

Average Recall (AR) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.331

Average Recall (AR) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.517

Average Recall (AR) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.618
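When comparing runs such as the 416 and 608 results above, it can be handy to pull the summary numbers out of the log text. The sketch below parses lines in the format shown; the regular expression and function name are assumptions written for this log layout, not part of the repository or of pycocotools.

```python
import re

# Matches lines such as:
# Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.335
SUMMARY_LINE = re.compile(
    r"Average (?:Precision|Recall)\s+\((AP|AR)\) @\[ IoU=([\d.:]+)\s*\|"
    r" area=\s*(\w+)\s*\| maxDets=\s*(\d+)\s*\] = ([\d.]+)"
)


def parse_coco_summary(text):
    """Map (metric, IoU range, area, maxDets) to the reported value."""
    results = {}
    for kind, iou, area, max_dets, value in SUMMARY_LINE.findall(text):
        results[(kind, iou, area, int(max_dets))] = float(value)
    return results


log = """
Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.335
Average Precision (AP) @[ IoU=0.50 | area= all | maxDets=100 ] = 0.565
Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 1 ] = 0.280
"""
summary = parse_coco_summary(log)
print(summary[("AP", "0.50", "all", 100)])  # 0.565
```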

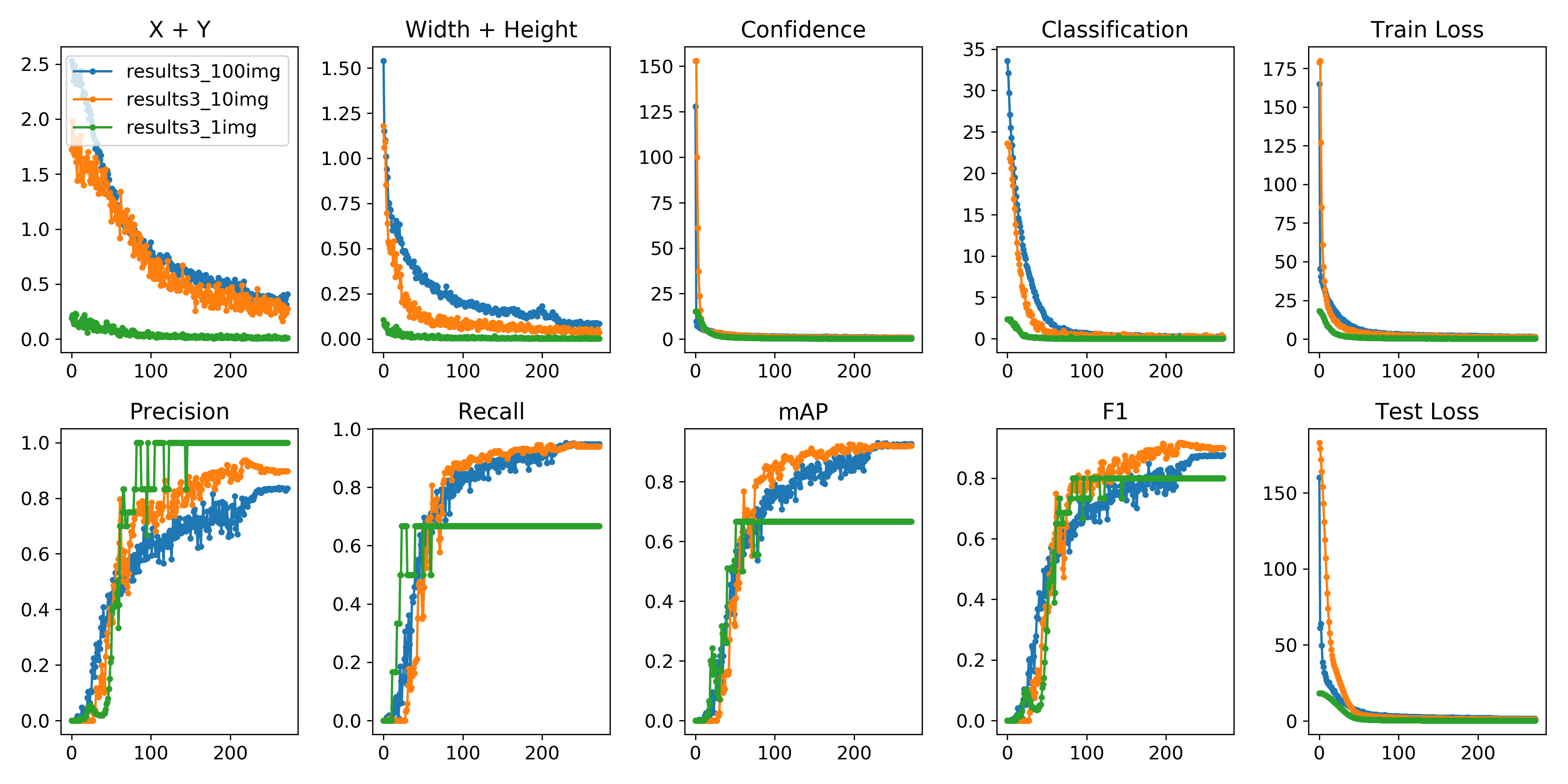

6. Visualization

You can run python -c "from utils import utils; utils.plot_results()"

Create drawLog.py:

def plot_results():
    # Plot YOLO training results file 'results.txt'
    import glob
    import numpy as np
    import matplotlib.pyplot as plt

    # import os; os.system('rm -rf results.txt && wget https://storage.googleapis.com/ultralytics/results_v1_0.txt')
    plt.figure(figsize=(16, 8))
    s = ['X', 'Y', 'Width', 'Height', 'Objectness', 'Classification', 'Total Loss', 'Precision', 'Recall', 'mAP']
    files = sorted(glob.glob('results.txt'))
    for f in files:
        results = np.loadtxt(f, usecols=[2, 3, 4, 5, 6, 7, 8, 17, 18, 16]).T  # column 16 is mAP
        n = results.shape[1]
        for i in range(10):
            plt.subplot(2, 5, i + 1)
            plt.plot(range(1, n), results[i, 1:], marker='.', label=f)
            plt.title(s[i])
            if i == 0:
                plt.legend()
    plt.savefig('./plot.png')


if __name__ == "__main__":
    plot_results()

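7. Advanced: modifying the network structure

Detailed cfg file walkthrough: https://pprp.github.io/2018/09/20/YOLO cfg文件解析/

References and notes on modifying the network: https://pprp.github.io/2019/06/20/YOLO經驗總結/

Discussion in the comments is welcome and helps me keep improving this tutorial.

PS: I recently wrote a one-click script that converts VOC2007-format data directly into the format required by the ultralytics version of yolov3. It is available here: https://github.com/pprp/voc2007_for_yolo_torch

PS: How do you add an attention mechanism? https://www.cnblogs.com/pprp/p/12241054.html This is part 7 of the "Learning YOLOv3 from Scratch" tutorial series; for the other parts, follow the GiantPandaCV WeChat official account and browse its past articles, or look through my earlier posts. More attention modules and plug-and-play modules are available at: https://github.com/pprp/SimpleCVReproduction/tree/master/Plug-and-play module (stars are welcome).

YOLOv4 has been released; see my write-up summarizing YOLOv4.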